The promise of artificial intelligence has been around for decades. Scientists and experts have long claimed that true AI is just around the corner, yet it seems to remain perpetually 25 years away. While we have not yet reached true AI, and may not within our lifetime, there have been some incredible advances in the field. This is particularly evident in the area of natural language processing (NLP).

The Dawn of Large Language Models

NLP is a branch of AI concerned with enabling computers to understand and process human language. This is a complex task, as human language is often ambiguous and can be interpreted in different ways. However, recent advances in NLP have produced some incredible results, with computers now able to understand and respond to human language in increasingly natural ways. This is a significant step forward for AI, and it is hoped that it will lead to further advances in the future.

One of the most impressive achievements in NLP has been the development of large language models (LLMs) capable of few-shot or even one-shot learning. This means they can learn a new task from just a few examples, or even a single one.

This is a massive breakthrough because we can now create automated software using nothing but natural language to specify its function, with no coding required. For example, with a single sentence one can now create software that automatically translates text from one language to another, or that generates new text based on a few examples. This opens up a whole new world of possibilities for NLP applications, as they can process a wide variety of user input and quickly take on new functions and features as situations demand.
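To make the one-shot idea concrete, here is a minimal Python sketch of how such a natural-language specification might be assembled into a prompt. The function name `build_few_shot_prompt` and the `Input:`/`Output:` layout are hypothetical conventions chosen for illustration, not the required format of any particular model, and the sketch only builds the text; sending it to an LLM is left out.

```python
def build_few_shot_prompt(instruction: str, examples: list[tuple[str, str]], query: str) -> str:
    """Assemble a prompt from a task description, worked examples and a new input.

    Hypothetical layout: the model is expected to continue the text
    after the final "Output:" line with its answer.
    """
    lines = [instruction, ""]
    # Each (source, target) pair demonstrates the task once.
    for source, target in examples:
        lines.append(f"Input: {source}")
        lines.append(f"Output: {target}")
        lines.append("")
    # The new input is left without an answer for the model to complete.
    lines.append(f"Input: {query}")
    lines.append("Output:")
    return "\n".join(lines)


prompt = build_few_shot_prompt(
    "Translate English to French.",
    [("Good morning", "Bonjour")],  # a single example makes this a one-shot prompt
    "Thank you",
)
print(prompt)
```

Adding more `(input, output)` pairs to `examples` turns the one-shot prompt into a few-shot one; no task-specific code changes, only the text that describes and demonstrates the task.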

Next-gen virtual assistants

This is great news for applications relying on voice search, whether Siri, the Google Assistant or their next-generation equivalents! Today they struggle to interpret user queries that do not follow specific command structures, which limits their usefulness. With the flexibility afforded by LLMs, however, virtually any input could produce helpful output for the user.

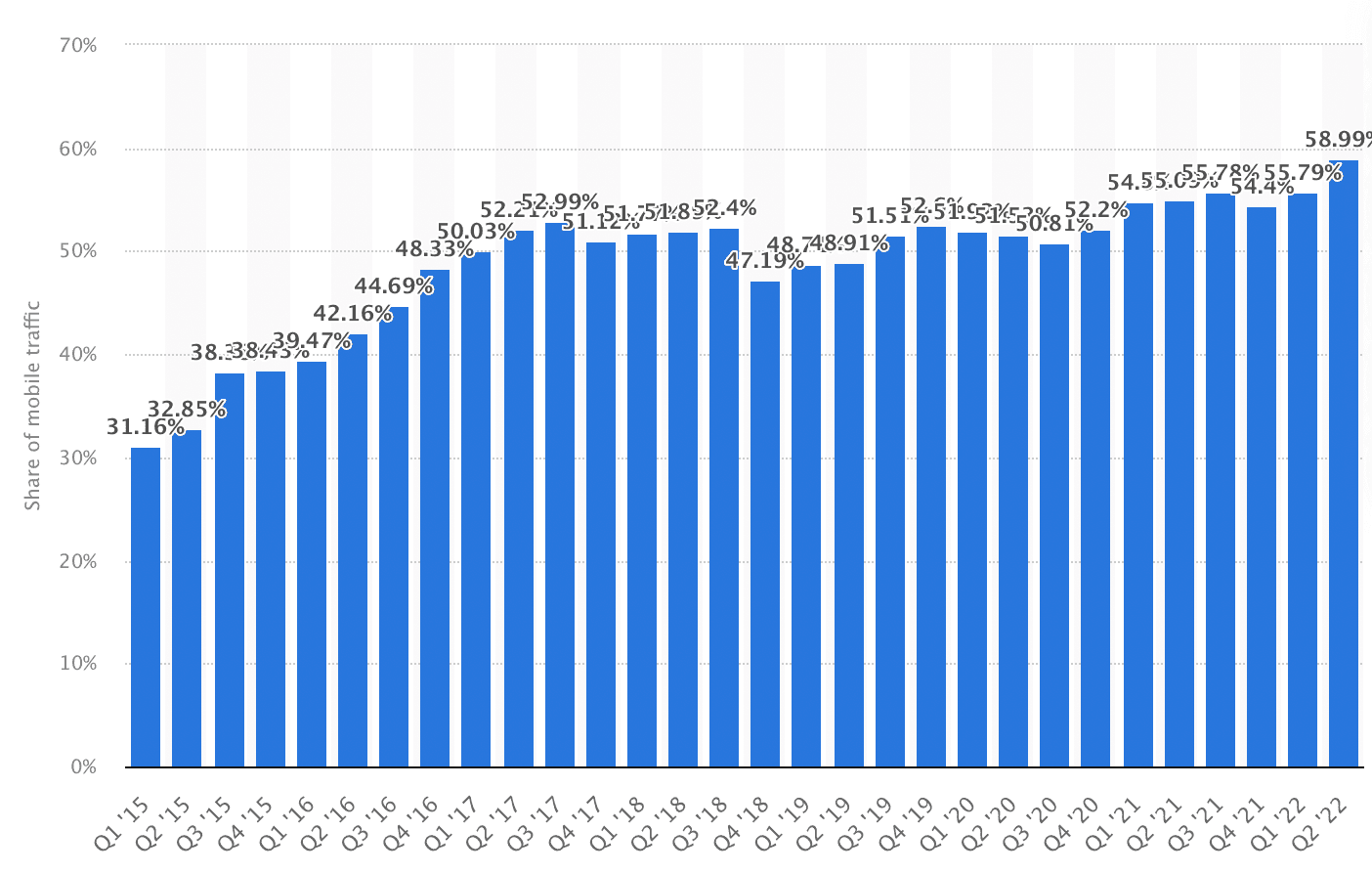

To put the importance of this market sector in perspective: despite its serious limitations, voice search with the Google Assistant makes up 30% of all queries in some countries, according to Google. This is likely because speaking is faster and easier than typing out a search query, especially on mobile devices. As mobile devices now account for the majority of online traffic worldwide (60%, rising by approximately 1% per year on average), we can expect voice search and virtual assistants to grow in importance going forward. This development might also benefit from a shift towards AR and VR, whether in the form of the Metaverse or something similar, since these environments forgo keyboards and other button-based input methods, putting voice commands front and centre.

Figure: Increasing share of mobile online traffic as a percentage of total online traffic (worldwide).

The end of graphical user interfaces?

Indeed, if LLMs can deliver on what their current capabilities suggest, the flexibility they afford would far outstrip anything theoretically possible with graphical user interfaces (GUIs). After all, GUIs only allow for select inputs in a clearly defined manner. If there is no button for a specific function, wishing for one will not make it appear. With a sufficiently capable LLM, however, simply voicing a request will, as long as it is technically feasible, produce an outcome, and likely the desired one.

This considerable advantage of LLMs could mean a paradigm shift. Just as the 1980s saw a move from command-line interaction with operating systems to GUIs, thanks to their gentler learning curve, we might see yet another shift, away from GUIs and towards simply speaking to our devices as if they were another person, because of the greater flexibility this approach affords. We'd request information, dictate notes, and have them write an entire email or article from nothing but a few prompts (filling in all the gaps, or even suggesting a complete email up front without us having to lift a finger!). We'd follow their advice on how to reach the best Italian restaurant or cook a healthy meal, and listen to them correct our form while working out, based on input from smart devices.

Whatever the future holds, we can already be sure that our interaction with next-generation software will feel a lot more human. Reach out to the Pomegranate Team to chat about how we can help you prepare for the next generation.